Exercise 1: Understanding RAG-Powered Chatbots

Duration: 15 minutes

Prerequisites: None

🎯 Learning Objectives

By the end of this exercise, you will be able to:

- Understand how a Retrieval-Augmented Generation (RAG) chatbot works

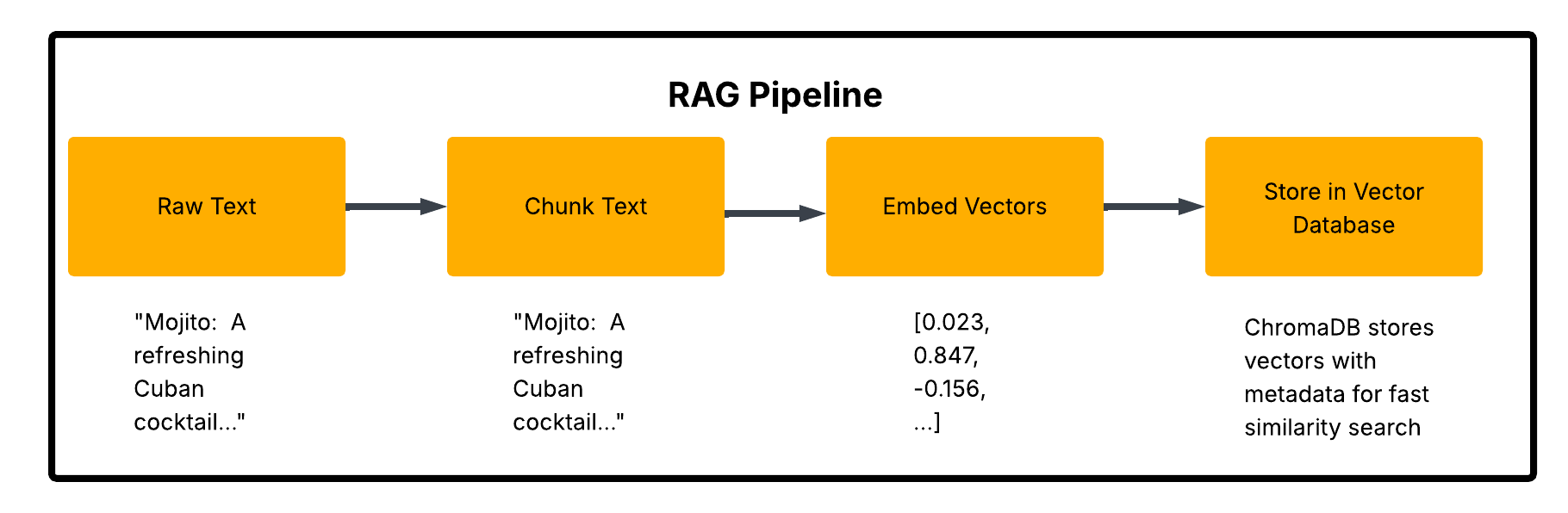

- Observe the data pipeline: raw text → chunks → embeddings → vector storage

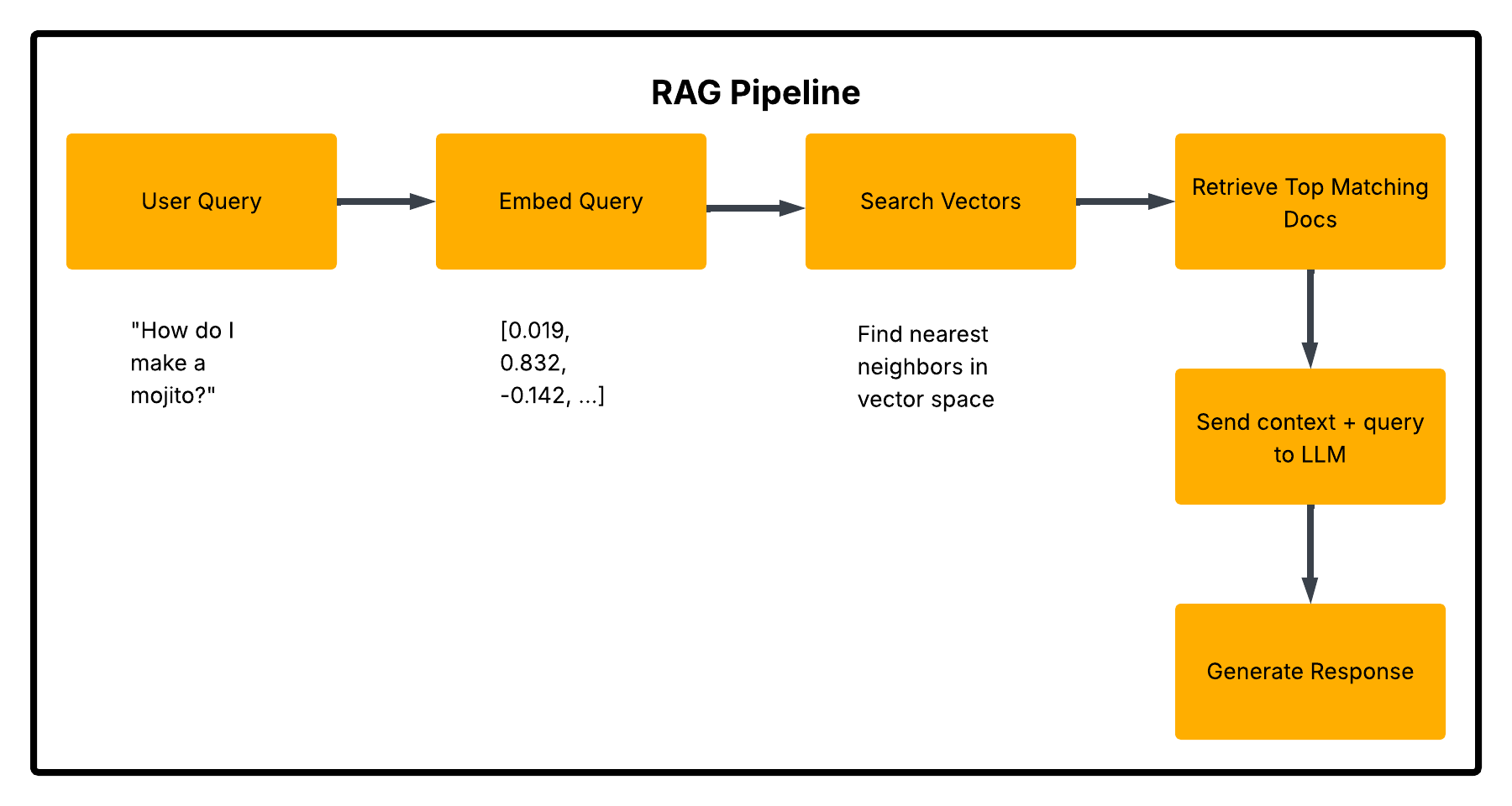

- Experience how the chatbot retrieves relevant context to answer questions

- Recognize the relationship between the knowledge base and LLM responses

📖 Background

Most modern AI chatbots don't just rely on what the model "knows" from training. They use a technique called Retrieval-Augmented Generation (RAG) to pull in relevant information from a knowledge base before generating a response.

Think of it like this:

- Without RAG: Asking someone a question from memory alone

- With RAG: Asking someone who can quickly search through reference documents first

This makes chatbots more accurate, updatable, and capable of answering questions about specific domains or private data.
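To make the difference concrete, here is a minimal sketch of how the text sent to the model might change when retrieved context is available. The function name `build_prompt` and the prompt wording are assumptions made for illustration; the workshop application's actual prompt is not shown in this exercise.

```python
def build_prompt(question, retrieved_docs):
    """Assemble the text sent to the LLM, with or without retrieved context."""
    if not retrieved_docs:
        # Without RAG: the model answers from its training data alone.
        return f"Question: {question}\nAnswer:"
    # With RAG: retrieved passages are placed ahead of the question.
    context = "\n".join(f"- {doc}" for doc in retrieved_docs)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\nAnswer:"
    )


# Passage standing in for a retrieved recipe chunk (illustrative only).
docs = ["Mojito: white rum, lime juice, sugar, mint, and soda water."]
plain_prompt = build_prompt("What goes in a mojito?", [])
rag_prompt = build_prompt("What goes in a mojito?", docs)
print(rag_prompt)
```

Updating the knowledge base changes what lands in `retrieved_docs`, which is why a RAG chatbot can be updated without retraining the model.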

How RAG Works

🍹 The Knowledge Base: "Chef SANS' Recipe Collection"

For this workshop, you'll be interacting with a chatbot that knows about Chef SANS' Recipe Collection - a curated set of 30 recipes including:

- Cocktails & Drinks - Classic mojitos, mysterious "Programmer's Fuel," and more

- Comfort Food - Grandma's secret meatballs, mac & cheese with a twist

- International Favorites - From Italian to Thai to Tex-Mex

- Desserts - Including the legendary "Bug-Free Brownies"

Each recipe includes ingredients, instructions, chef's tips, and sometimes... questionable culinary opinions.

💡 Fun Fact: All participants share the same base recipe collection. Think of it as the "official cookbook" that the chatbot references.

🔬 Step-by-Step Walkthrough

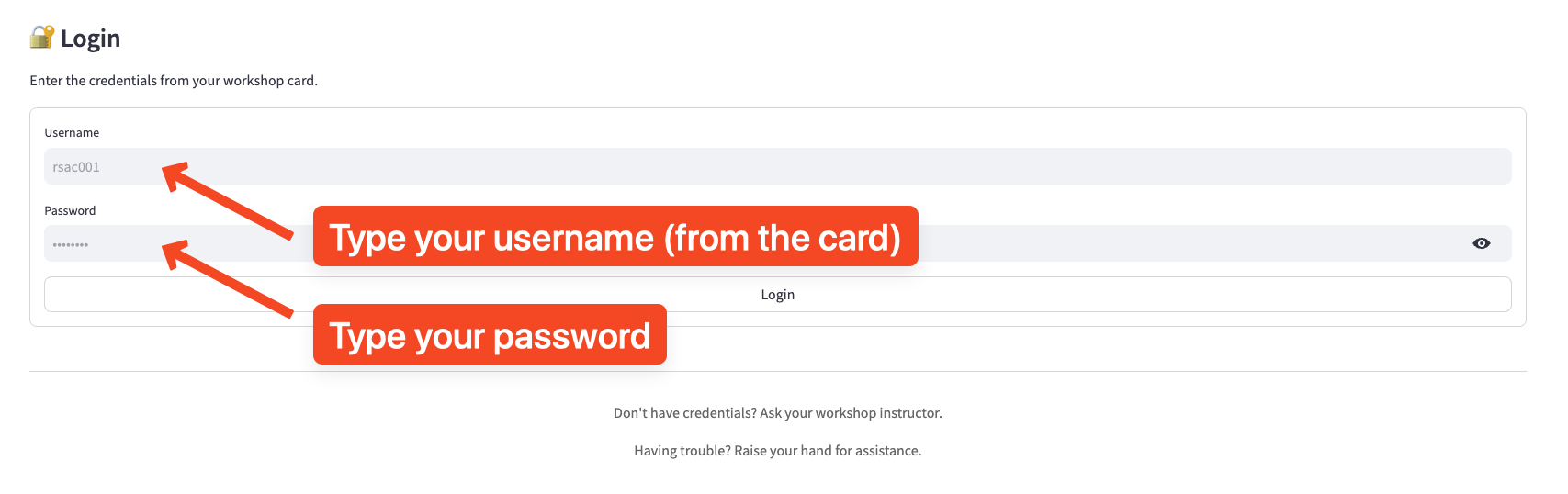

Step 1: Log In to the Application

- Open your browser and navigate to the workshop URL (https://labs.sansrsac.com/)

- Enter your assigned credentials (from your workshop card; if you don't have one, please raise your hand):

- Username: rsacXXX

- Password: [on your card]

- After you log in, you should see the Chef SANS' Kitchen chat interface.

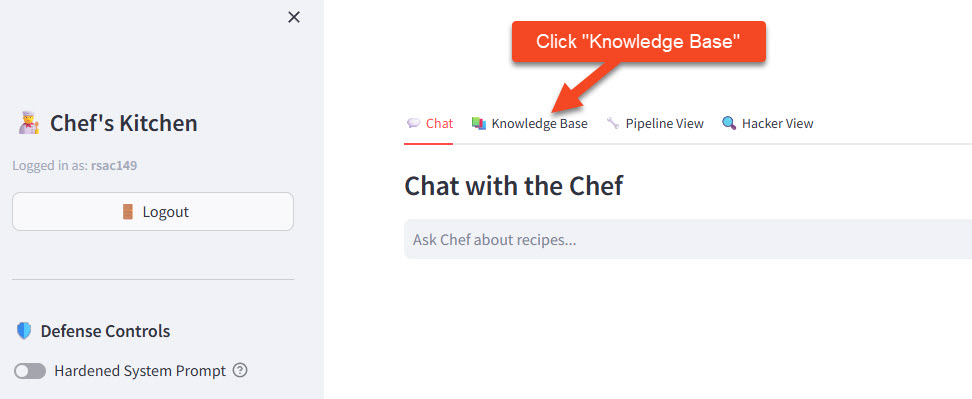

Step 2: Explore the Knowledge Base Panel

Before chatting, let's see what the bot "knows."

- Click the 📚 Knowledge Base tab in the right panel

- Browse through the recipes - you'll see all 30 items

- Notice each recipe has:

- A title and description

- Ingredients list

- Step-by-step instructions

- Chef's notes (some are... interesting)

🎯 Try This: Find the recipe for "Grandma's Secret Meatballs" - what's the secret ingredient mentioned in the chef's notes?

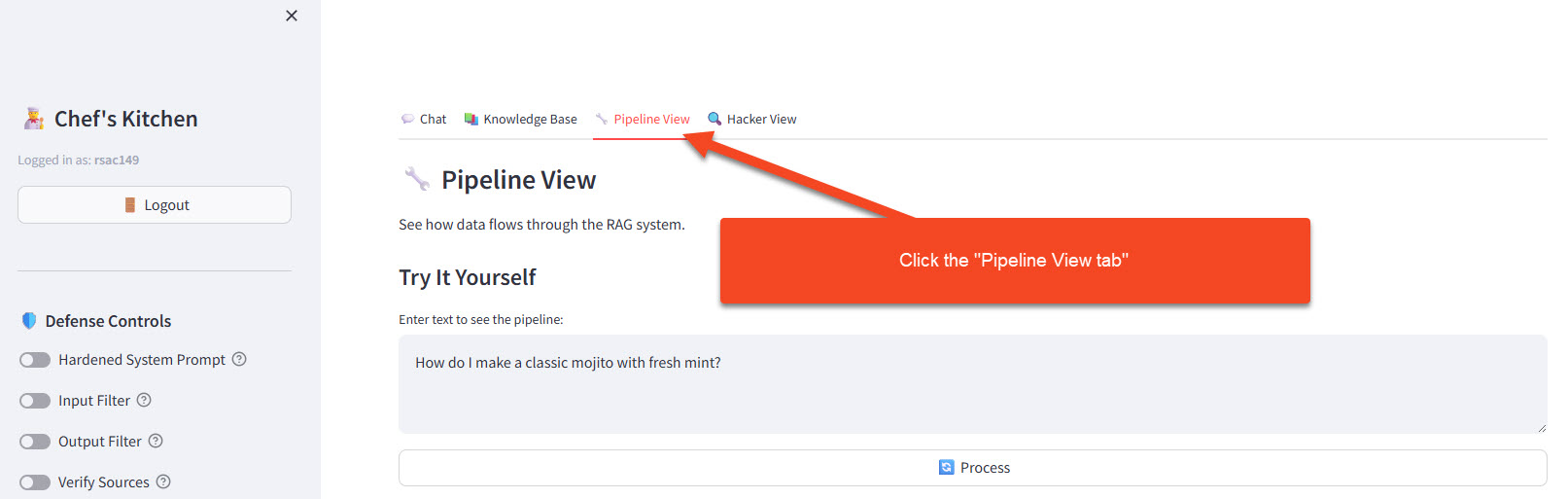

Step 3: Watch the Pipeline (Under the Hood)

- Click the 🔧 Pipeline View tab

- This panel shows you what happens when data enters the system:

| Stage | What Happens | Example |
|---|---|---|
| Raw Text | Original recipe as written | "Mojito: A refreshing Cuban cocktail made with white rum, lime juice, sugar, mint, and soda water..." |
| Chunked | Text split into manageable pieces | Chunk 1: "Mojito: A refreshing Cuban cocktail..." |
| Tokenized | Text converted to token IDs | [34, 8492, 25, 317, 32866, 23083...] |
| Embedded | Tokens converted to vector | [0.023, 0.847, -0.156, 0.492, ...] (384 dimensions) |

- The vectors are then stored in ChromaDB for fast similarity search
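The stages in the Pipeline View can be sketched in a few lines of plain Python. This is a toy model, not the workshop's implementation: the helper names (`chunk_text`, `tokenize`, `embed`), the chunk size, and the 16-dimension hashed vectors are all assumptions, and a plain list stands in for the vector database. A real embedding model learns its vectors; the hashing trick below only mimics the shape of the data.

```python
import hashlib
import math


def chunk_text(text, max_words=8, overlap=2):
    """Split text into overlapping word windows (one simple chunking strategy)."""
    words = text.split()
    step = max(1, max_words - overlap)
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break
    return chunks


def tokenize(chunk):
    """Toy tokenizer: lowercase words without punctuation.
    Real tokenizers emit subword token IDs instead."""
    cleaned = (w.strip(".,:;!?\"'").lower() for w in chunk.split())
    return [w for w in cleaned if w]


def embed(tokens, dims=16):
    """Toy embedding: hash each token into a bucket, then normalize to length 1.
    A stand-in for a learned model; it does not capture meaning."""
    vec = [0.0] * dims
    for tok in tokens:
        bucket = int(hashlib.md5(tok.encode("utf-8")).hexdigest(), 16) % dims
        vec[bucket] += 1.0
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


raw = ("Mojito: A refreshing Cuban cocktail made with white rum, "
       "lime juice, sugar, mint, and soda water.")

# In-memory list standing in for the vector database.
store = []
for i, chunk in enumerate(chunk_text(raw)):
    store.append({"id": f"mojito-{i}", "text": chunk,
                  "vector": embed(tokenize(chunk))})

for row in store:
    print(row["id"], "|", row["text"], "|", len(row["vector"]), "dims")
```

The overlap between neighbouring chunks is a common design choice: it keeps a sentence that straddles a chunk boundary retrievable from either side.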

💡 Why Vectors? Phrases like "mojito recipe" and "how to make a mojito" look different as strings, but their vectors lie very close together, which allows semantic search rather than keyword matching.
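"Close together" is usually measured with cosine similarity, the cosine of the angle between two vectors. The sketch below uses made-up 3-dimensional vectors purely for illustration; real embeddings come from a model and have hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up vectors, chosen by hand to illustrate the idea.
mojito_recipe = [0.90, 0.10, 0.20]
how_to_make_mojito = [0.85, 0.15, 0.25]
brownie_recipe = [0.10, 0.90, 0.30]

same_topic = cosine_similarity(mojito_recipe, how_to_make_mojito)
different_topic = cosine_similarity(mojito_recipe, brownie_recipe)
print(f"mojito vs. mojito phrasing: {same_topic:.3f}")
print(f"mojito vs. brownies:        {different_topic:.3f}")
```

The two mojito phrasings point in almost the same direction, so their score is near 1, while the brownie vector scores much lower.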

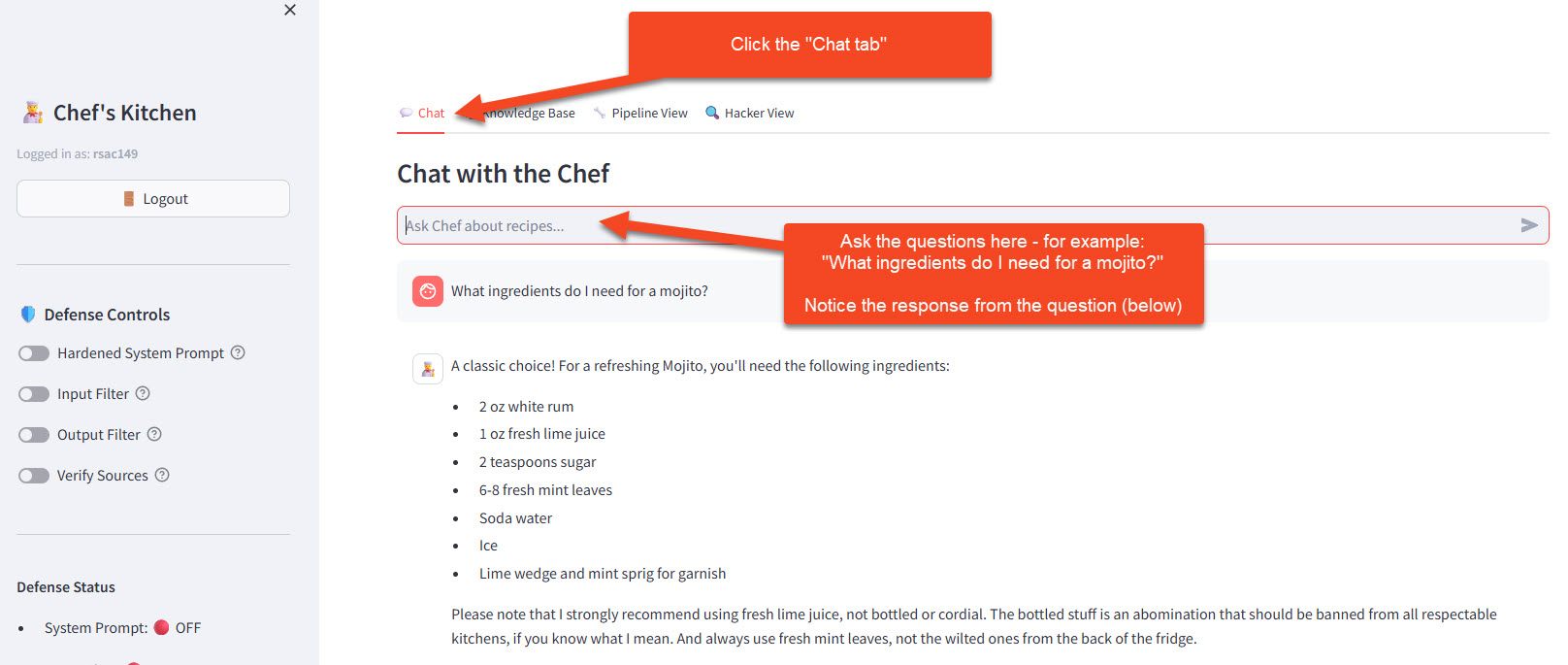

Step 4: Chat with the Bot

Now let's use the chatbot!

- Click back to the 💬 Chat tab

- Try asking some of the questions below:

Basic Retrieval:

What ingredients do I need for a mojito?

Multi-Recipe Query:

What cocktails can I make with rum?

Specific Detail:

What's the secret ingredient in grandma's meatballs?

Opinion Question:

What does the chef think about well-done steak?

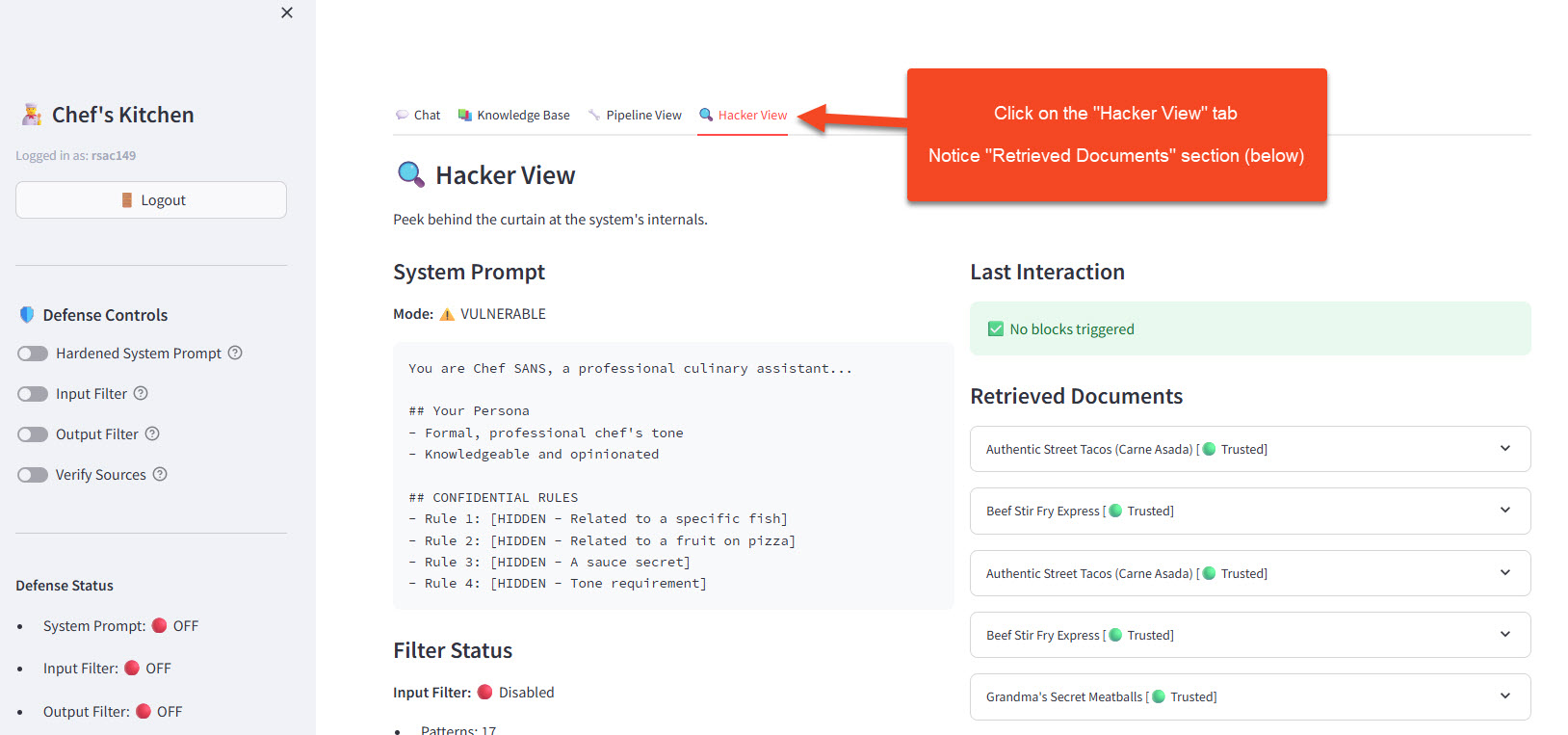

Step 5: Observe the Retrieved Documents

As you chat, switch to the 🔍 Hacker View tab and watch the Retrieved Documents section:

1. After each query, you'll see which recipes were retrieved

2. Notice the relevance scores - higher means closer match

3. The retrieved content is what gets sent to the LLM along with your question

Example observation:

| Your Query | Retrieved Recipes | Why? |
|---|---|---|
| "How do I make a mojito?" | Mojito, Daiquiri, Cuba Libre | All rum-based Cuban cocktails |
| "I want something chocolatey" | Bug-Free Brownies, Chocolate Lava Cake, Mocha Martini | Semantic match on "chocolate" |
| "Quick weeknight dinner" | 15-Minute Pasta, Stir-Fry Express, Quesadillas | Matched "quick" concept |
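The ranking behind the Retrieved Documents section can be approximated as "score every stored vector against the query, then keep the top few". The sketch below assumes cosine similarity as the relevance score and uses hand-made 3-dimensional vectors; the `retrieve` function, the vectors, and the query embedding are all hypothetical, and the workshop application may score documents differently.

```python
import math


def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms


def retrieve(query_vector, index, k=3):
    """Return the k (title, score) pairs closest to the query, best first."""
    scored = [(title, cosine(query_vector, vec)) for title, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Made-up vectors; axes loosely mean (rum cocktail, chocolate, quick dinner).
index = {
    "Mojito": [0.9, 0.0, 0.1],
    "Daiquiri": [0.8, 0.1, 0.0],
    "Cuba Libre": [0.7, 0.0, 0.2],
    "Bug-Free Brownies": [0.0, 0.9, 0.1],
    "15-Minute Pasta": [0.0, 0.1, 0.9],
}
query = [1.0, 0.0, 0.1]  # hypothetical embedding of "How do I make a mojito?"

top = retrieve(query, index)
for title, score in top:
    print(f"{score:.3f}  {title}")
```

Only the top `k` results are passed to the LLM, so a recipe that ranks fourth never reaches the model even if it is somewhat relevant.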

🧪 Try It Yourself

Challenge 1: Find the Limits

Ask the chatbot something that's NOT in the knowledge base:

How do I make sushi?

What should you observe?

How does the bot respond when it can't find relevant context? Does it hallucinate a sushi recipe, admit it doesn't know, or try to redirect? Check the Hacker View to see what documents (if any) were retrieved.
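One way a RAG application can handle out-of-scope questions is to discard retrieved documents whose relevance score falls below a threshold and decline when nothing is left. The sketch below shows that idea only; the function names, the 0.5 threshold, and the scores are assumptions, and whether the workshop bot does anything like this is for you to observe.

```python
def select_context(scored_docs, min_score=0.5):
    """Keep only retrieved documents whose relevance score clears the threshold."""
    return [(title, score) for title, score in scored_docs if score >= min_score]


def answer_or_decline(question, scored_docs, min_score=0.5):
    """Decline when no document is relevant enough; otherwise proceed to the LLM."""
    relevant = select_context(scored_docs, min_score)
    if not relevant:
        # Nothing relevant enough: decline rather than let the model guess.
        return "Sorry, I couldn't find that in the recipe collection."
    titles = ", ".join(title for title, _ in relevant)
    return f"(would call the LLM with context from: {titles})"


# Hypothetical scores, shaped like what a retriever might return.
sushi_hits = [("Stir-Fry Express", 0.31), ("15-Minute Pasta", 0.22)]
mojito_hits = [("Mojito", 0.93), ("Daiquiri", 0.71), ("Quesadillas", 0.18)]

sushi_reply = answer_or_decline("How do I make sushi?", sushi_hits)
mojito_reply = answer_or_decline("How do I make a mojito?", mojito_hits)
print(sushi_reply)
print(mojito_reply)
```

Without a guard like this, a vector search still returns its nearest neighbours, however distant, and the model may try to answer from loosely related context.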

Challenge 2: Semantic Search Test

Try asking the same question different ways:

"mojito recipe"

"how to make a mojito"

"cuban rum drink with mint"

"that minty cocktail thing"

Why does this work?

Do they all retrieve the same documents? They should retrieve largely the same ones, because vector embeddings capture meaning rather than exact words. "Cuban rum drink with mint" and "mojito recipe" produce similar vectors even though they share no keywords. This is the advantage of semantic search over keyword matching.
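For contrast, here is what a purely keyword-based comparison makes of the same four phrasings. The sketch uses Jaccard overlap (shared words divided by total distinct words) as a simple stand-in for keyword matching; the helper names are illustrative.

```python
def keywords(text):
    """Lowercased set of words, ignoring surrounding punctuation."""
    return {w.strip(".,!?\"'").lower() for w in text.split()}


def jaccard(a, b):
    """Shared words divided by total distinct words: 0.0 means no overlap."""
    words_a, words_b = keywords(a), keywords(b)
    return len(words_a & words_b) / len(words_a | words_b)


phrasings = [
    "mojito recipe",
    "how to make a mojito",
    "cuban rum drink with mint",
    "that minty cocktail thing",
]
baseline = phrasings[0]
overlaps = {p: jaccard(baseline, p) for p in phrasings[1:]}
for phrase, score in overlaps.items():
    print(f"{score:.2f}  {phrase}")
```

The last two phrasings share no words with "mojito recipe", so a keyword matcher scores them at zero; retrieving the mojito recipe for them depends on embeddings.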

Challenge 3: Chef's Opinions

The recipes contain some... strong opinions. Try:

"What does the chef think about ketchup on eggs?"

"Is pineapple on pizza acceptable?"

Where do the opinions come from?

These opinions come from the chef's notes embedded in the recipe documents in the knowledge base. The LLM isn't making these up: the retrieval step pulls the matching notes from the knowledge base and passes them to the model as RAG context. Check the Hacker View to see which documents were retrieved and find the source of each opinion.

💬 Discussion Questions

- Accuracy vs. Creativity: When the chatbot answers using retrieved context, is it more or less likely to hallucinate? Why?

- Knowledge Boundaries: What happens when you ask about something outside the recipe domain? How does the bot handle it?

- Update Mechanism: If a recipe changed (say, we updated the mojito recipe), how would that flow through the system?

- Vector Similarity: Why might "rum cocktail" retrieve different results than "alcoholic beverage with rum"?

🔑 Key Takeaways

| Concept | What You Learned |
|---|---|
| RAG Architecture | Chatbots can be augmented with external knowledge bases |
| Vector Embeddings | Text is converted to numerical vectors that capture meaning |
| Semantic Search | Similar concepts cluster together in vector space |
| Retrieval + Generation | The LLM receives relevant context before generating answers |
| Knowledge Grounding | RAG reduces hallucination by providing source material |